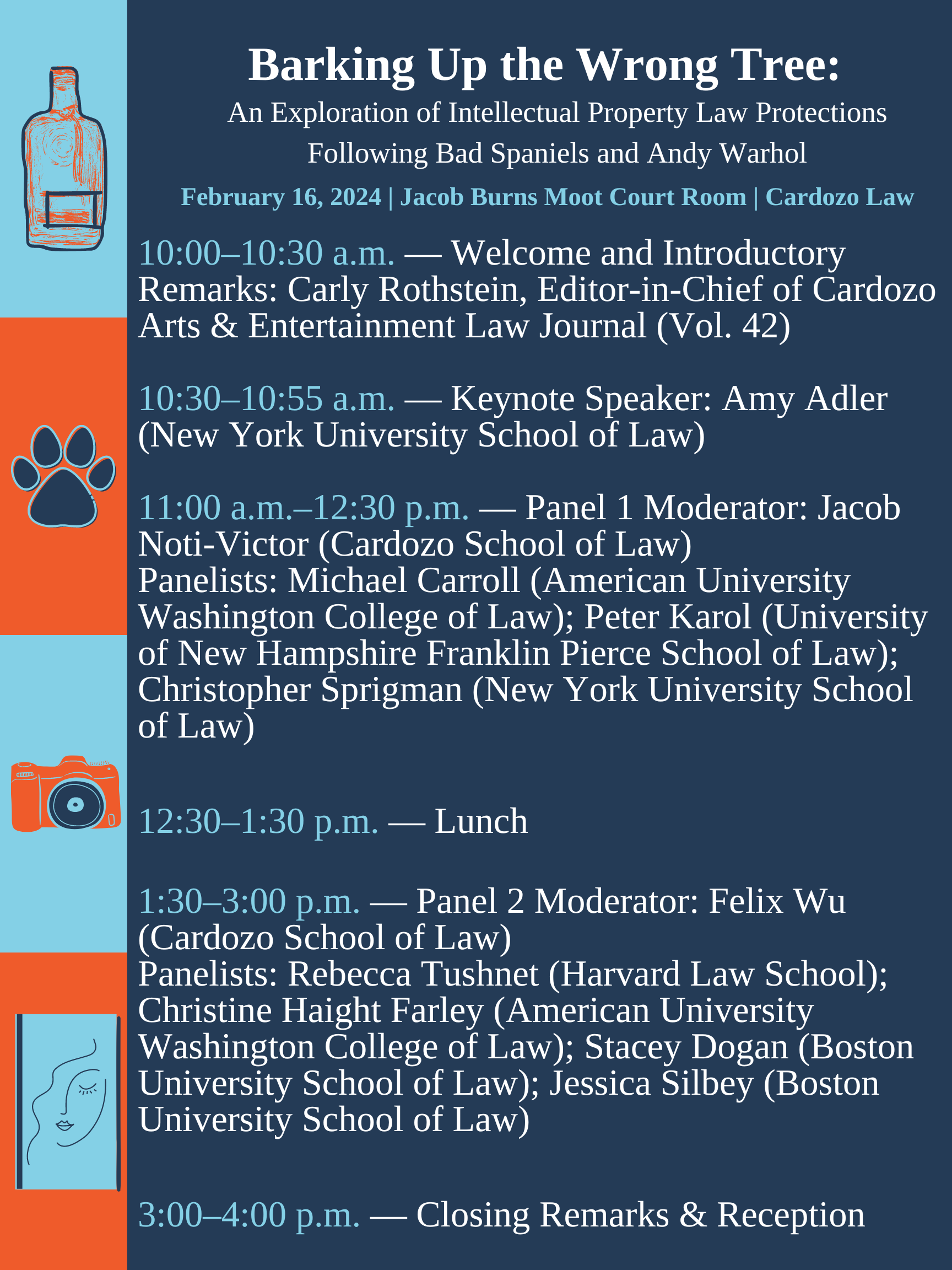

Cardozo AELJ’s Spring 2024 Symposium Explores the Implications of the Warhol and Bad Spaniels Decisions on Copyright and Trademark Law

Thank you to everyone who attended and participated in the Cardozo Arts & Entertainment Law Journal’s spring symposium, “Barking Up the Wrong Tree: An Exploration of Intellectual Property Law Protections Following Bad Spaniels and Andy Warhol.” AELJ is proud to…